@InProceedings{Lin_2023_ICCV_SSLmirror,

author = {Lin, Jiaying and Lau, Rynson W.H.},

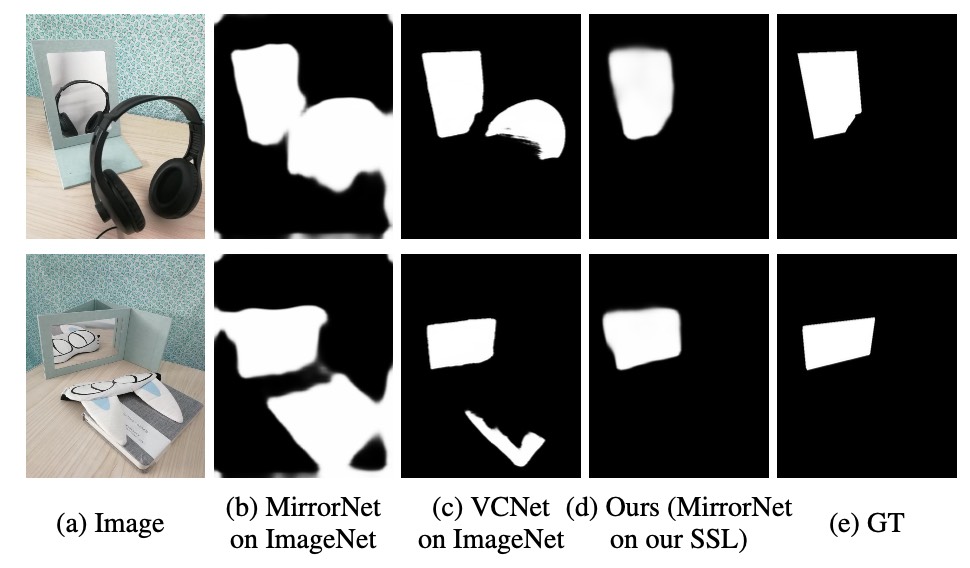

title = {Self-supervised Pre-training for Mirror Detection},

booktitle = {Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV)},

month = {October},

year = {2023},

}